Lightweight recommender system for RecSys 2022

A recommender system framework for session-based fashion recommendation, built for the ACM RecSys Challenge 2022 and used in our paper.

This project summarizes our solution for the ACM RecSys Challenge 2022 (team Boston Team Party), described in (Della Volpe et al., 2022). The system is a two-stage, lightweight and scalable pipeline: strong candidate generators propose items, and a GBDT learning-to-rank model blends model scores with content + seasonality features to output the final top-100 list.

Goal: predict the purchased item at the end of each anonymous session and return a ranked top-100 list (evaluated with MRR).

- Complementary candidate generators (sequence, graph, KNN, autoencoders, popularity)

- Feature-rich re-ranking with GBDTs (heterogeneous signals)

- Explicit seasonality via interaction weighting + entropy-based tendency

- Public leaderboard MRR: 0.18800

- Strong accuracy–efficiency tradeoff (practical for real-world pipelines)

- Open-source for reproducibility

Problem

In session-based fashion recommendation, users are anonymous and may have no long-term profile. For each session (a sequence of views ending with a purchase), the task is to produce a top-100 ranking containing the purchased item, scored by Mean Reciprocal Rank (MRR).
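As a rough illustration of the metric, the sketch below computes MRR over top-100 lists. The function names and the toy session data are hypothetical and are not taken from the challenge's evaluation code.

```python
def reciprocal_rank(ranked_items, purchased_item, k=100):
    """Return 1/rank of the purchased item within the top-k list, or 0.0 if absent."""
    for rank, item in enumerate(ranked_items[:k], start=1):
        if item == purchased_item:
            return 1.0 / rank
    return 0.0


def mean_reciprocal_rank(predictions, ground_truth, k=100):
    """Average the reciprocal rank over every session in ground_truth.

    predictions:  {session_id: [item_id, ...]} ranked best-first
    ground_truth: {session_id: purchased_item_id}
    """
    total = sum(
        reciprocal_rank(predictions.get(session_id, []), item, k)
        for session_id, item in ground_truth.items()
    )
    return total / len(ground_truth)


# Hypothetical toy data: three sessions with short ranked lists.
predictions = {1: [10, 20, 30], 2: [40, 50, 60], 3: [70, 80, 90]}
ground_truth = {1: 10, 2: 50, 3: 99}
mrr = mean_reciprocal_rank(predictions, ground_truth)
```

Here the purchased item is ranked first, second, and not at all, so the three reciprocal ranks are 1, 1/2, and 0.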

Data

The dataset contains 18 months of online fashion sessions (Jan 2020–Jun 2021), with ~1.1M sessions and ~24k items. Each session includes views and a purchase, with timestamps and sparse item attributes.

Approach

1) Candidate generation (fast, diverse experts)

We trained multiple recommenders and merged their top candidates per session. The pool included:

- Sequential: GRU4Rec

- Graph-based: RP3Beta

- Nearest-neighbors: ItemKNN (CF+CBF), UserKNN (CF), plus a content-only KNN for sparse/cold cases

- Autoencoders / shallow models: EASE^R, MultVAE, RecVAE

- Non-personalized: TopPop
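One simple way to merge per-model candidates is to take the union of each model's top-k items and keep every model's score as a separate column for the ranker. The sketch below assumes that strategy; the function name, the input layout, and the toy scores are illustrative assumptions rather than the repository's actual code.

```python
def merge_candidates(model_recs, top_k=100):
    """Union the top_k items of each model for a single session.

    model_recs: {model_name: [(item_id, score), ...]} sorted by descending score.
    Returns {item_id: {model_name: score}}; a model that did not propose
    an item simply has no entry for it.
    """
    merged = {}
    for model_name, recs in model_recs.items():
        for item_id, score in recs[:top_k]:
            merged.setdefault(item_id, {})[model_name] = score
    return merged


# Hypothetical scores from two of the generators listed above.
model_recs = {
    "gru4rec": [(1, 0.9), (2, 0.5), (4, 0.1)],
    "rp3beta": [(2, 0.7), (3, 0.1)],
}
pool = merge_candidates(model_recs, top_k=2)
```

Item 4 falls outside the top 2 of its only proposing model, so it is not part of the pool.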

To better match fashion dynamics and recency, we used interaction weighting (views vs purchases, cyclic decay, exponential decay) for URM-based models.
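The three weighting ideas can be sketched as small functions. The exact formulas and parameter values used in the paper are not given here, so the half-life, the cosine form of the cyclic decay, and the view/purchase base weights below are all assumptions chosen for illustration.

```python
import math


def exponential_decay(age_days, half_life_days=30.0):
    """Weight halves every half_life_days (assumed parameterization)."""
    return 0.5 ** (age_days / half_life_days)


def cyclic_decay(age_days, period_days=365.0):
    """Weight peaks for interactions at the same point of the yearly cycle.

    Assumed form: a raised cosine in [0, 1] with a one-year period.
    """
    return 0.5 * (1.0 + math.cos(2.0 * math.pi * age_days / period_days))


def interaction_weight(kind, age_days, decay=exponential_decay,
                       view_weight=1.0, purchase_weight=2.0):
    """Combine an assumed base weight per interaction type with a decay function."""
    base = purchase_weight if kind == "purchase" else view_weight
    return base * decay(age_days)
```

With these assumed defaults, a purchase made one half-life ago carries the same weight as a view made today, and under the cyclic variant an interaction from exactly one year ago counts as much as a current one.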

2) Feature engineering (content + compact embeddings + seasonality)

Feature engineering was central to the final gain. Highlights:

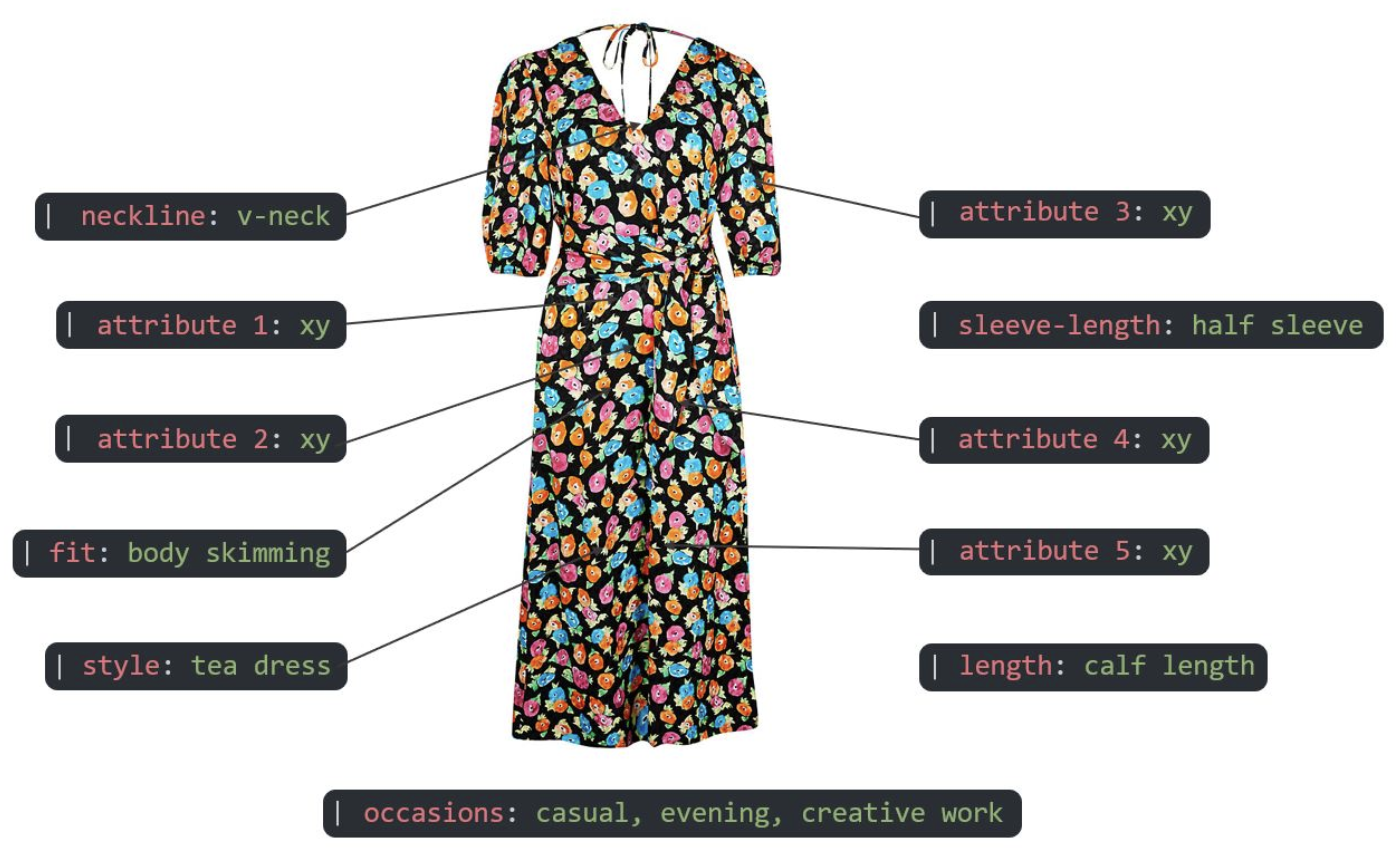

- Item content encoding: multi-label encoding over attribute pairs (904 unique (category,value) tuples).

- Dimensionality reduction: a VAE compresses item content into a compact latent representation (latent size 32).

- Session representations: embeddings aggregated over session items and a RecVAE session encoding used as additional signals.

- Seasonality signal: an entropy-based seasonal tendency feature measuring whether an item is seasonal vs all-season (computed separately for views and purchases).
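To make the seasonality signal concrete, the sketch below computes a normalized Shannon entropy over the months in which an item was interacted with. Using calendar months as bins and normalizing by log(12) are assumptions made for this example; the paper's exact definition may differ.

```python
import math
from collections import Counter


def seasonal_entropy(months, n_bins=12):
    """Normalized entropy of an item's interaction months.

    months: iterable of month numbers (1-12), one per interaction.
    Returns a value in [0, 1]: near 0 means interactions concentrate in a
    few months (seasonal), near 1 means they spread evenly (all-season).
    Returns None when the item has no interactions.
    """
    counts = Counter(months)
    total = sum(counts.values())
    if total == 0:
        return None
    entropy = -sum((c / total) * math.log(c / total) for c in counts.values())
    return entropy / math.log(n_bins)


# Hypothetical items: one seen only in July, one seen once in every month.
summer_only = seasonal_entropy([7] * 10)
all_season = seasonal_entropy(list(range(1, 13)))
```

Calling the function once on view months and once on purchase months yields the two separate features described above.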

3) Ranking (GBDT learning-to-rank)

We cast re-ranking as a learning-to-rank (LETOR) task in which each row is a (session, candidate item) pair described by model scores, item/session features, and seasonality signals.

We trained LambdaMART with LightGBM (and compared to XGBoost), optimizing MAP@100 (chosen for strong correlation with MRR and tool support). LightGBM was both faster and stronger in our experiments.
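The (session, candidate item) rows described above could be assembled roughly as follows. This is a minimal sketch using only model scores as features; the function name, the input layout, and the choice to fill missing scores with 0.0 are assumptions, not the repository's actual pipeline.

```python
def build_ranking_rows(candidates_by_session, purchases, model_names):
    """Flatten per-session candidates into learning-to-rank inputs.

    candidates_by_session: {session_id: {item_id: {model_name: score}}}
    purchases:             {session_id: purchased_item_id}
    Returns (features, labels, group_sizes), where group_sizes[i] is the
    number of consecutive rows belonging to the i-th session.
    """
    features, labels, group_sizes = [], [], []
    for session_id in sorted(candidates_by_session):
        candidates = candidates_by_session[session_id]
        for item_id in sorted(candidates):
            scores = candidates[item_id]
            # Assumption: a model that did not propose the item contributes 0.0.
            features.append([scores.get(name, 0.0) for name in model_names])
            labels.append(1 if purchases.get(session_id) == item_id else 0)
        group_sizes.append(len(candidates))
    return features, labels, group_sizes


# Hypothetical toy input with two sessions and two candidate generators.
candidates_by_session = {
    1: {10: {"gru4rec": 0.9}, 20: {"gru4rec": 0.4, "rp3beta": 0.6}},
    2: {30: {"rp3beta": 0.2}},
}
purchases = {1: 20, 2: 30}
X, y, groups = build_ranking_rows(candidates_by_session, purchases, ["gru4rec", "rp3beta"])
```

Feature rows, binary relevance labels, and per-query group sizes are the kind of input a LambdaMART implementation expects; for instance, LightGBM's `LGBMRanker.fit` accepts a `group` argument holding these sizes.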

Solution outline

1) Candidate generators

- Sequence (GRU)

- Graph (RP3Beta)

- KNN + content

- Autoencoders

- TopPop

2) Ranking features

- Model scores (per candidate)

- Item attributes + embeddings

- Session embeddings

- Seasonality tendency

3) Ranker

- LambdaMART (LightGBM)

- XGBoost baseline

- MAP@100 optimization

Results

- Public leaderboard MRR: 0.18800

- LightGBM ranker outperformed XGBoost in our pipeline (public leaderboard MRR 0.18800 vs 0.18347).

- The final model is competitive, lightweight, and scalable, with each feature family contributing meaningfully to ranking.

Skills developed

- Session-based recommendation: retrieval models spanning sequence-aware, graph-based, KNN, and autoencoder approaches.

- Learning-to-rank with GBDTs: LambdaMART with LightGBM, comparison to XGBoost, MAP@100-driven training.

- Feature engineering at scale: multi-label encoding for sparse taxonomies, embeddings via VAE/RecVAE, score normalization.

- Temporal/seasonal modeling: interaction weighting (views vs purchases, cyclic + exponential decay) and entropy-based seasonal tendency features.

- Reproducible experimentation: hyperparameter tuning with Optuna and validation split design aligned to the challenge protocol.

Artifacts

- Paper: (Della Volpe et al., 2022) (PDF)

- Code: Repository